I discovered this morning that Google seems to be cloaking results for some users based on either your browser user agent or possibly the extensions you are running in Firefox.

Like many search marketers, I frequently use Google custom queries. By far, the most common query I use is the site: command which shows the pages from a particular domain or folder within a domain. While preparing for SMX next week, I have been researching the effect of pagination and trying to demonstrate whether or not paginated pages are in the index.

I am doing a case study for my client, GreatSchools.org, examining their San Francisco Preschools ratings. To see whether any of the paginated pages are in the index, I search for “site:www.greatschools.org/ inurl:p=2”

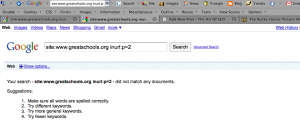

When I run this search using Firefox, I get the following results:

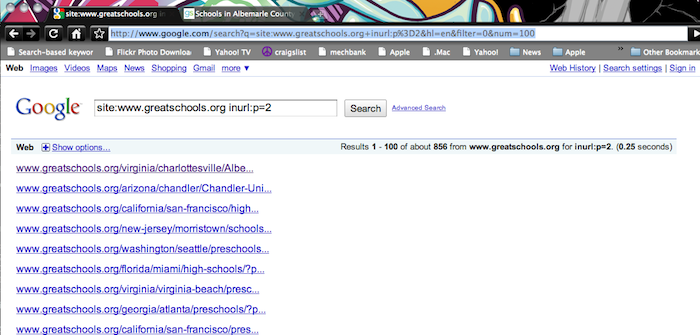

When I repeat this query in Chrome, I see VERY different results. So it looks like Google is cloaking results for Firefox users.

Update: 11:11 AM: There is some debate about whether changing content based on browser user agent detection is really cloaking. I may be mixing my euphemisms, but I wouldn’t want to be making that distinction in a Google re-inclusion request. The impact of this on regular users is virtually non-existent, but if this is not an isolated bug, professional SEOs are going to have to start using Chrome on occasion (the good news is that Safari seems unaffected). If anyone wants to test this with a clean version of Firefox that doesn’t have any SEO extensions, let me know what you get. Maybe this is only because I have the SEOBook Toolbar and/or SEO For Firefox installed.

Update 2:01 PM: Commenters are reporting mixed results: some confirm what I am seeing, while others get the same results in Firefox and Chrome. This leads to an open debate about whether Google is “personalizing” site: results, showing different results based on IP, browsing history, etc., or just screwing with me because I am a “known” SEO.

Updated 3/3/10: Confirmed with Byran Hordling, lead engineer on Google Personalization, that this is not a personalization issue.

Update: 2/27/10, 1:30 PM. Michael VanDeMar pointed out that the pagination pages are being excluded by robots.txt. This explains the lack of metadata being shown, but does nothing to address why Chrome & Safari show different results from IE.

In both IE and Firefox, I see the same results as you do in Chrome. I have Aaron’s SEO Toolbar installed, and tried it with it turned on and it turned off. I also tried while being logged in to my Google account and being logged out. Never could get the results you saw in Firefox 🙁

Looks like a good Firefox extension would be something that gave a fake User-Agent of Chrome instead of Firefox. If Google wants to play the game that way…
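A minimal sketch of how the same idea could be tested outside the browser, assuming Python's standard `urllib`: it builds the identical `site:` query twice, once per `User-Agent` header, so the two responses could be compared. The user agent strings are shortened placeholders rather than real browser strings, and `build_search_request` is a hypothetical helper name. Nothing is sent by this code.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Placeholder user agent strings (assumptions for illustration only);
# substitute the exact strings your own browsers send.
USER_AGENTS = {
    "firefox": "Mozilla/5.0 (placeholder) Firefox/3.6",
    "chrome": "Mozilla/5.0 (placeholder) Chrome/4.0",
}

QUERY = "site:www.greatschools.org/ inurl:p=2"


def build_search_request(query, user_agent):
    """Build (but do not send) a Google search request with a given User-Agent."""
    url = "https://www.google.com/search?" + urlencode({"q": query})
    return Request(url, headers={"User-Agent": user_agent})


requests_by_browser = {
    name: build_search_request(QUERY, ua) for name, ua in USER_AGENTS.items()
}

for name, req in requests_by_browser.items():
    # urllib stores header names capitalized as "User-agent".
    print(name, req.full_url, req.get_header("User-agent"))
```

Passing each request to `urllib.request.urlopen` would actually send it; automated queries may be blocked or rate-limited, so treat this as a way to reason about the test rather than a ready-made tool.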

You could install Firefox Portable on a pen drive, but AFAIK it is widely known that Google will vary the result set based on a user’s IP, geolocation, time of day AND browser used.

http://portableapps.com/apps/internet/firefox_portable

Come to think of it, it might even vary based on cookies from previous searches (for non-logged-in users, i.e., aside from personalized results) and the browser Accept-Language order.

Tested using Firefox 3.5.8 with both the SEOBook and SEOmoz toolbars (at different times). I used your exact search (with cut and paste) and received a single entry. I got full results after clicking the “repeat the search with the omitted results included” link.

Forgot to mention, it worked exactly the same way with Chrome for me.

I am using Firefox, and that query returns 279 results for me.

There is also the possibility that different browsers wind up hitting different datacenters, each of which returns a different result for whatever reason.

Additionally, if you are wondering why the results you do see show URLs only, it is probably because the individual school pagination pages (or any school page with URL parameters) are blocked in robots.txt. This line, for instance:

Disallow: /*/*/*schools/?*

matches this url (along with all of the others I looked at):

/virginia/charlottesville/Albemarle-County-Public-Schools/schools/?p=2&pageSize=25

Some datacenters might simply be excluding results blocked by robots.txt altogether, instead of showing the url by itself.
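The wildcard match described above can be checked with a short sketch. It assumes Google-style robots.txt matching, where `*` matches any run of characters, a trailing `$` anchors the end of the URL, and every other character (including `?`) is literal. The helper names `robots_pattern_to_regex` and `is_disallowed` are made up for this illustration, and the second test path is hypothetical.

```python
import re


def robots_pattern_to_regex(pattern):
    """Translate a wildcard robots.txt path pattern into a compiled regex.

    Assumes Google-style matching: '*' matches any run of characters,
    a trailing '$' anchors the end of the URL, and everything else
    (including '?') is literal. Patterns match from the start of the path.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_disallowed(pattern, path):
    """Return True if the path (with query string) matches the Disallow pattern."""
    return robots_pattern_to_regex(pattern).match(path) is not None


RULE = "/*/*/*schools/?*"
PAGINATED = "/virginia/charlottesville/Albemarle-County-Public-Schools/schools/?p=2&pageSize=25"
# Hypothetical path without a query string, for contrast.
NO_QUERY = "/virginia/charlottesville/Albemarle-County-Public-Schools/schools/"

print(is_disallowed(RULE, PAGINATED))  # True
print(is_disallowed(RULE, NO_QUERY))   # False
```

Under these assumptions the rule only catches URLs that carry a query string after `schools/`, which is why the paginated pages are the ones that drop out.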

John & Shane

Thanks for the testing and the data. I have a couple of independent confirmations and a couple of people not seeing this. I have been seeing the same results for a while, logged in, not logged in, cookies deleted, etc. I guess I have been profiled as an SEO and Google is just messing with me…

Try GoogleCamo extension and see what happens.. not the greatest extension but maybe relevant here.

I’m not so surprised at Google cheating, but I am surprised they allow themselves to be caught. It’s not the results that matter, it’s the impact of showing results.

If Google Chrome delivers a better experience (conversion wise) for the Google searching public, Google has yet another potential PR nightmare on its hands as it defends the concept of showing specific results only to specific audiences (grouped by their adoption of Google’s products), or as an anti-competitive practice against IE/FF.

Sad that with all those resources and all that granted authority, Google still feels the need to cheat.

At some point, racketeering is racketeering, regardless of whether it’s carried out by computer algorithm or street thugs.

Thanks for pointing out the robots.txt. I didn’t notice they added that exclusion.

So far, Florida respondents are seeing 279 while I get 856 in California using Chrome and nothing using FF on a Mac. Confirmed seeing nothing with a couple other SEOs here in the Bay Area.

John

Camo doesn’t make any difference. I suppose I could try to bounce my PPPoE connection to grab a new IP address, but I almost don’t care how Google is doing this, only the fact that they are clearly showing very different results. Sadly, as Michael VanDeMar pointed out, it looks like my client added a disallow to robots.txt in an attempt to knock out something else, so the actual pagination pages I was interested in are deliberately not in the index…. ;(

Jonah, try using a hosts file to see what various datacenters return. These are all active:

64.233.189.161

66.102.9.147

74.125.47.103 (<- this is the one I am hitting atm)

You have to use a hosts file since Google switched to AJAX queries, or you'll just end up back at whatever Google.com ip you were hitting before.
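For anyone who has not edited a hosts file before, each entry is an IP address followed by the hostname it should resolve to. The sketch below only formats such entries for the three IPs listed above; mapping them to `www.google.com` is an assumption, the helper names are hypothetical, and only one entry should be active at a time.

```python
# IPs quoted in the comment above; they may no longer be live.
DATACENTER_IPS = ["64.233.189.161", "66.102.9.147", "74.125.47.103"]


def hosts_entry(ip, hostname="www.google.com"):
    """Format one hosts-file line: IP address, whitespace, hostname."""
    return f"{ip}\t{hostname}"


def hosts_block(ips, active_index=0, hostname="www.google.com"):
    """Return hosts lines with every entry commented out except the active one."""
    lines = []
    for i, ip in enumerate(ips):
        prefix = "" if i == active_index else "# "
        lines.append(prefix + hosts_entry(ip, hostname))
    return "\n".join(lines)


print(hosts_block(DATACENTER_IPS, active_index=2))
```

Switching `active_index` and re-running the query after each change is one way to compare what each datacenter returns.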

Also, you could try the query on different country domains, such as Google.co.uk or Google.ca, and see if they give you any better results. Even if they do show, however, you won't actually see anything other than the urls. I didn't examine the robots.txt closely, but it is rather large. Since the asterisk matches anything, you might want to have your client review it closely, and perhaps run some pages through the Google Webmaster Tools robots.txt tool just to be safe.

Logged in to my Google account on Safari for Mac, I got the same results as you got in Chrome. Not the same results on Firefox 3.6, still logged in. I bet this is a server thing. The client definitely needs to hide a lot of stuff tho’, nice find @michael

Sam

In this case, the client is super squeaky clean white hat (all of my clients are) and graciously allowed me to feature them in a case study for SMX. This side show wasn’t originally intended to be about them, but I hope enough of my colleagues link to the post and my client’s site to make up for the exposure.

I Googled the same term from Hamilton, New Zealand and got 1 result initially and 662 with omitted results included. I have switched off personalized search as a general practice so my browsing history would not be a factor (not that I’ve ever done this or a similar search, so personalized search wouldn’t be an issue anyway).

Are you using Firefox, IE, Safari or Chrome? Do you see the same results from each one?

Opting in and out of search personalization is a pain in the ass. If you’re looking for a Firefox extension to always block Google’s personalized search, we recently developed Google Camo, which automatically updates your account settings to opt out of personalized search. It also has a handy, color-coded icon that alerts you any time you may be opted back in without realizing it.

You can download it here: http://www.iexposure.com/googlecamo

@john andrews Thanks for mentioning Google Camo. We’re working out the kinks with the Firefox extension and will have a “great” one up and running soon!

Hello there. To my surprise, I experienced something similar to what you describe. I googled for it and bumped into your site!

The same operator, site:www.mysite.com, showed more pages indexed in Firefox and IE, and fewer in Chrome (haven’t tested elsewhere so far).

I was puzzled, as I got the opposite of what you describe: fewer results in Chrome and more in Firefox.

So after reading your post and your comments, I disabled the SEOquake extension in Firefox. Same results. But I also disabled the ChromeSEO extension, and THAT’S when I got the same results in all three browsers.

So in my case, the ChromeSEO extension shows fewer results when you use the site:www.mysite.com operator.

Oh, and of course I logged out of Gmail, etc.

Hope it helps 🙂

Cheers!

There is more news today… http://twitter.com/cafulcura/status/11486650237